“One of the things I like about SwordSTEM is that you don’t have an inherent publication bias. It seems like even if your conclusion is ‘Well, this shows nothing’, you still publish it.”

Lots of people say nice things about SwordSTEM, but this is one of the comments I’ve appreciated most. In academic publishing there is a significant issue known as the Positive Publication Bias, which is a very easy trap to fall into. Here’s how it works:

1) Some budding anonymous researcher has a question, and forms a hypothesis.

2) The hypothesis is tested for truth (this is where the science happens).

Photo of me working on stats for SwordSTEM

3) Depending on the results of 2) we can either end up at:

3a) There turns out to be a relationship between the two things being studied (say, match length and shallow targeting). Either the results are significant and support the hypothesis or, even more exciting, they show the exact opposite. These interesting results are submitted to a journal for publication. People organizing tournaments or scheduling practices can use this information however they want.

or

3b) It turns out there is no relationship between the match length and shallow targeting. This is not a very interesting statement on its own, and no academic journal wants to take up space publishing this ‘dead end’ research.

This publication bias towards positive results leads us to a very skewed understanding of the world. First of all, by not publishing the negative result, the body of knowledge about tournament stats is not expanded. Maybe an event organizer wanted to know if that was actually the case and now has to guess. Maybe* someone just makes something up out of nowhere and it becomes common knowledge.

Even worse is that it biases what research is performed. No one investigates anything where they don’t expect a significant finding, leaving whole areas of study neglected. And this is the Positive Publication Bias.

*Okay, almost for certain this is what happens.

SwordSTEM – Times I Was Good

Usually I write my articles in a narrative tone, explaining my findings as I go along. This is because ~~I am literally writing as I am going through doing my findings~~ I am a great writer and storyteller. And as a result the negative results flow smoothly into the narrative.

An example of some of the negative results (aka those which failed to find a relationship) I have published in the past:

- In Nordic Rules tournaments the number of hits to each target area looks mostly the same if you use 1&2 points as if you use 2&3 points for your different target areas. Full Afterblow Smackdown: 1&2 vs 2&3

- There isn’t a correlation between going for deeper (and thus higher valued targets) and winning more matches. Likewise, keeping to shallow targets doesn’t help you win either. Is Going For Deep Targets Worth It and More – Longpoint Stats Review

- There was no appreciable difference between Beginner and Advanced LS tournaments in terms of scoring exchanges. (Of course how they score is going to be pretty different, but I don’t have a number to quantify that; therefore it doesn’t exist.😉) How Much Does Participant Skill Affect Overall Behavior

- There isn’t a case of brackets being cleaner once the less skilled fencers are knocked out. If anything it is a case of the finals being slightly less clean, but close enough that it isn’t all that significant. Survive the Pools and Fence the Finals?

SwordSTEM – Times I Was Bad

It may shock you, but I’m not perfect. There have been occasions where I thought I might have something interesting, couldn’t get anything out of it, and dropped it for something else. This is a case of Positive Publication Bias at work. So time to revisit some of these.

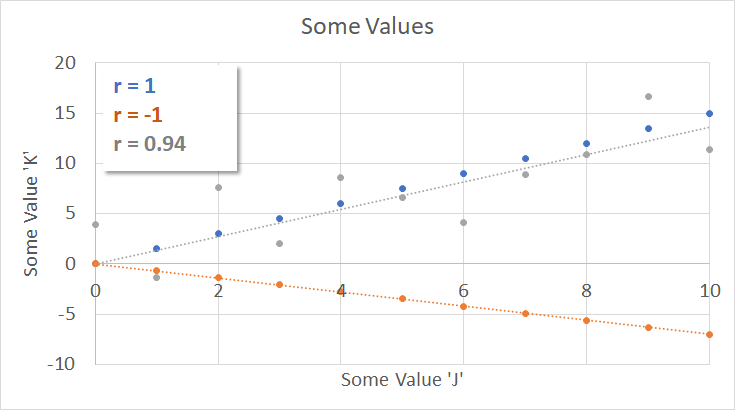

The correlation coefficient (r) is the primary tool I’ll be using to illustrate the relationship between two things, or more specifically the lack of a relationship. The further the value is from 0, the more things appear to be related, up to -1 or 1. (Once you know that they are related you start to look for a link. Just because they are related doesn’t mean that one caused the other!)

The plot below shows three different data series, and the correlation coefficients between the two variables tracked. For the blue (r = 1) this means an increase in J maps to a proportional (but not identical!) increase in K. For the orange data (r = -1) this means that an increase in J corresponds to a decrease in K every time.

For the gray we see that it is close to being perfectly related, but not completely – a.k.a. “highly correlated”. There is some noise tucked in here, but overall an increase in J correlates pretty strongly with an increase in K, hence the high correlation coefficient of 0.94. (You can think of this like the correlation between landing a hit and scoring points. Most of the time they are related, but with the judges in the mix…)
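To make the three cases concrete, here is a minimal sketch of how r can be computed by hand. The numbers are made up for illustration and are not the series from the plot; `pearson_r` is just a helper name chosen for this example.

```python
import math

def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # How much the two series move together around their means...
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    # ...scaled by how much each series moves on its own.
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

# Illustrative data only (assumption: not the real J/K values).
j = [1, 2, 3, 4, 5, 6]
k_up = [2 * x + 1 for x in j]     # straight line going up: r is 1
k_down = [10 - 3 * x for x in j]  # straight line going down: r is -1
k_noisy = [3, 4, 8, 7, 12, 11]    # upward trend with some noise: r a bit below 1

for name, k in [("up", k_up), ("down", k_down), ("noisy", k_noisy)]:
    print(f"{name}: r = {pearson_r(j, k):+.2f}")
```

Note that `k_up` is not equal to `j`, yet r is still 1. The coefficient only cares whether the points fall on a straight line, not how steep that line is.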

Double Hits Are Related To?

Back in the early days of HEMA Scorecard, before SwordSTEM was even a thing, I tried to look for patterns between different statistics. And there basically weren’t any so I abandoned that and stopped looking. And in later years when I was looking for interesting topics for SwordSTEM I naturally didn’t choose this for an article topic because it is boring. Bad Sean!

The following is data collected from tournaments using deductive afterblow rules which also tracked double hits.

| Statistic | Correlation to Double Hit % | What is it? |
|---|---|---|
| Clean Hit % | -0.04 | How often you land clean hits on them, vs how often they land clean hits on you |
| Overall Hit % | -0.02 | How often you land the original hit on them vs how often they land the original hit on you |
| Afterblow Defense % | -0.08 | How often you successfully defend the afterblow of your initial hit |
| Afterblow Return % | 0.01 | How often you land the afterblow after being hit |

If you wanted an example of unrelated data for a textbook, this would be it. Those values say that there is no connection between any of these things. Knowing that someone frequently double hits doesn’t tell you anything about their ability to land more hits than their opponent, defend against the afterblow, or land afterblows.

Afterblows Are Related To?

Using the same data set from the previous section, we can still do more. When you have a bunch of data points you can throw them all in a blender, and have the computer tell you what the correlation coefficients are between all the terms. Here is an output for four different properties:

Clean Hit % (how often you land clean hits on them, vs how often they land clean hits on you) is very strongly related to Overall Hit % (how often you land the original hit on them vs how often they land the original hit on you). Which is not something anyone is going to find surprising. These two numbers are also the most correlated to Win %, and at this point are a pretty good proxy for a fighter’s ability.

Let us turn our attention to the relationship between Afterblow Defense % and Afterblow Return %.

The value is -0.2, which is really low. It is basically saying that knowing someone is good at defending afterblows is no help in knowing if they are good at landing an afterblow. (Of course, in general, whether the afterblow lands or not is dependent on what the person being hit is doing before the afterblow lands, not what either of them does after. Doubles per Exchange and the Afterblow)

The relationship between the overall hit percentage and the afterblow percentages is also basically non-existent. Which is actually really interesting, as it suggests that how good a fighter you are has no bearing on how good you are at defending or landing afterblows. This is a perfect example of finding no relationship and still ending up with an interesting bit of knowledge.
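The "blender" step from this section, computing r for every pair of statistics, can be sketched roughly as follows. This is not the tool actually used for the article; the column names and the five rows of numbers are placeholders invented for the example, so the output says nothing about real fencers.

```python
import math
from itertools import combinations

def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

def correlation_table(columns):
    """Return {(name_a, name_b): r} for every pair of named columns."""
    return {
        (a, b): pearson_r(columns[a], columns[b])
        for a, b in combinations(columns, 2)
    }

# Placeholder data: one entry per (hypothetical) fighter, values in percent.
fighters = {
    "clean_hit_pct": [55, 48, 62, 40, 51],
    "overall_hit_pct": [57, 47, 60, 42, 50],
    "afterblow_defense_pct": [30, 45, 28, 41, 50],
    "afterblow_return_pct": [20, 35, 33, 18, 25],
}

table = correlation_table(fighters)
for (a, b), r in table.items():
    print(f"{a} vs {b}: r = {r:+.2f}")
```

Four statistics give six pairs, which is why even a small set of properties produces a grid of coefficients to read through.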

Are the Number of Doubles Connected to Closeness?

We’ve seen in the past that having high stakes will cause people to be more willing to trade hits to win. (Do they Double a Lot in the Swordfish Finals?, Full Afterblow Smackdown: 1&2 vs 2&3) But being on the biggest HEMA livestream in the world is a bit of an extreme case to study. Is the rule generally applicable?

I looked at the Polish scene for a good case study, as they call double hits but have no limit. Which means you see a lot of high double hit matches, giving a more continuous data set. The operative word here is more, as doubles and point values are still counted in discrete steps. When you look at it on a scatter plot it looks very artificial, and that’s because it is.

We have lots of points stacking on top of each other, and you can’t get much from the graph. I’m going to cheat a little bit and use R² here, instead of the “r” that we have been using for the correlation coefficient. Mainly because that is how Excel puts it on the plot. And it doesn’t really matter if you care about r or R²; a value of only 0.0815 is abysmal for either. Meaning there isn’t really a relationship between how close the match score is and the number of double hits which were landed.
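For anyone who wants to put the two numbers on the same footing: for a straight-line trendline with a single input, R² is simply r squared, so the size of r can be recovered with a square root. A quick sketch, assuming the 0.0815 came from that kind of simple linear fit:

```python
import math

r_squared = 0.0815  # value read off the trendline on the plot

# For a one-variable linear fit, R^2 = r^2, so |r| = sqrt(R^2).
# The sign of r is lost when squaring, so only the magnitude comes back.
r_magnitude = math.sqrt(r_squared)

print(f"|r| = {r_magnitude:.3f}")
```

That works out to a magnitude a little under 0.3, which is in the same weak territory as the afterblow numbers earlier.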

Wrapping Up

So, in addition to the boring stats lesson shoved down your throats, we did manage to learn something by publishing results which ended up with a whole lot of nothing. And that is:

- Double hits are basically not correlated with anything.

- Throwing and defending afterblows are independent skills in deductive afterblow rules.

- Being good at fencing has little bearing on how often you defend or throw afterblows in a deductive afterblow ruleset.

- In the Polish HEMA scene there is no connection between the number of doubles and how close a match is. It remains to be seen how well this result holds up in other regions.

- I should work on being more interesting as a writer, so I can make even a negative result engaging to read. Then I need not fear publication of negative data.